ShapBPT: Image feature attributions using Data-Aware Binary Partition Trees


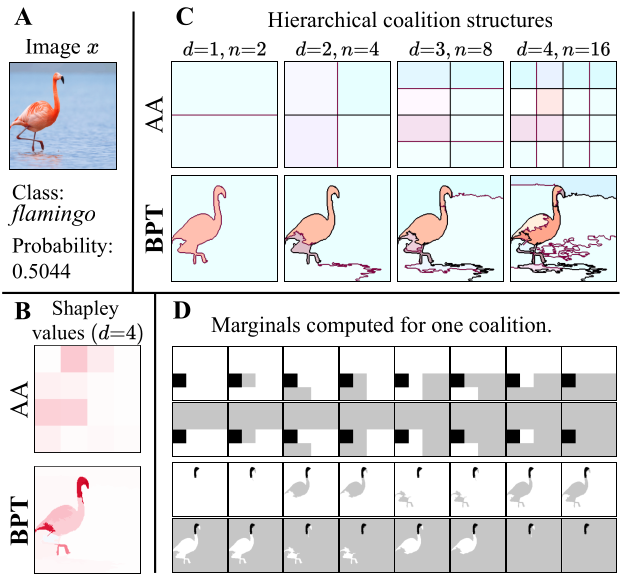

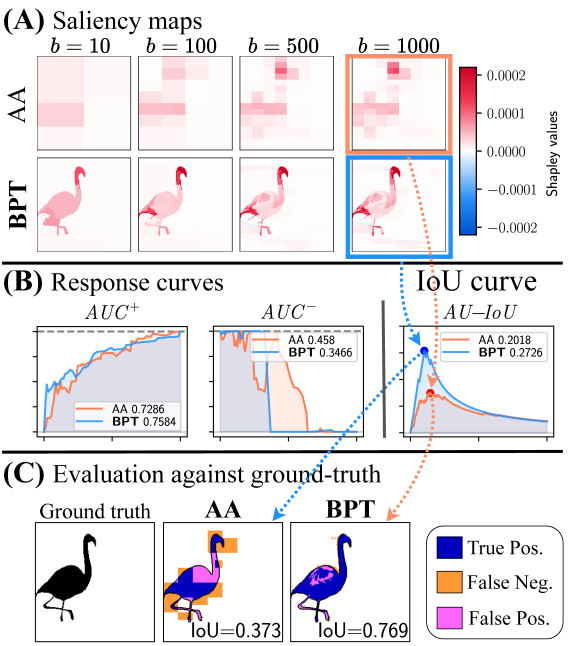

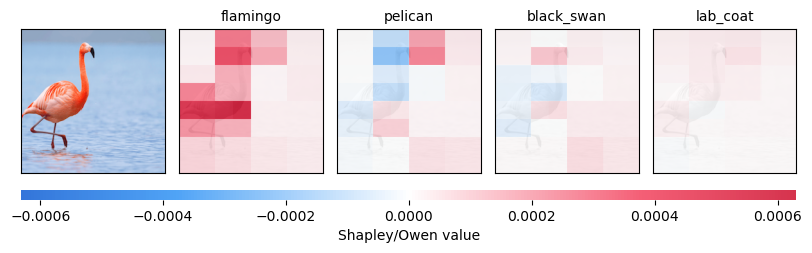

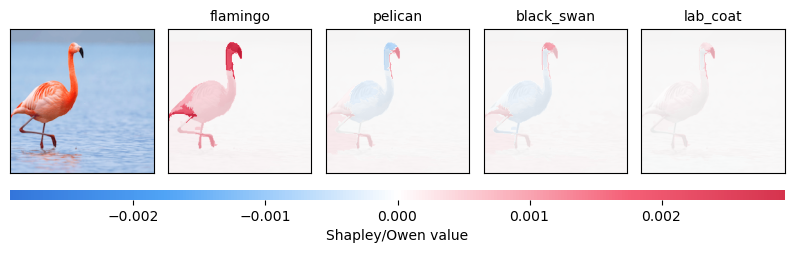

Pixel-level feature attributions play a key role in Explainable Computer Vision (XCV) by revealing how visual features influence model predictions. While hierarchical Shapley methods based on the Owen formula offer a principled explanation framework, existing approaches overlook the multiscale and morphological structure of images, resulting in inefficient computation and weak semantic alignment.

To bridge this gap, we introduce ShapBPT, a data-aware XCV method that integrates hierarchical Shapley values with a Binary Partition Tree (BPT) representation of images. By assigning Shapley coefficients directly to a multiscale, image-adaptive hierarchy, ShapBPT produces explanations that align naturally with intrinsic image structures while significantly reducing computational cost. Experimental results demonstrate improved efficiency and structural faithfulness compared to existing XCV methods, and a 20-subject user study confirms that ShapBPT explanations are consistently preferred by humans.
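The idea of pushing Shapley coefficients down a binary hierarchy can be illustrated with a small, self-contained sketch. This is not the `shap_bpt` implementation or its API. It assumes a toy hierarchy written as nested tuples of integer leaf ids (standing in for a BPT over image regions) and a hypothetical `value` function that scores a set of "visible" leaves (standing in for a model evaluated on a masked image). At each node, a child's marginal contribution is averaged over the two cases where its sibling is hidden or visible, which is the two-player form of the Owen formula.

```python
from typing import Callable, Dict, FrozenSet, Tuple, Union

# Hypothetical toy hierarchy: a leaf is an int, an inner node is a (left, right) pair.
Tree = Union[int, Tuple["Tree", "Tree"]]


def leaves(tree: Tree) -> FrozenSet[int]:
    """Return the set of leaf ids below a node."""
    if isinstance(tree, int):
        return frozenset([tree])
    return leaves(tree[0]) | leaves(tree[1])


def owen_values(tree: Tree, value: Callable[[FrozenSet[int]], float]) -> Dict[int, float]:
    """Hierarchical (Owen-style) Shapley values for the leaves of a binary tree."""
    phi = {leaf: 0.0 for leaf in leaves(tree)}

    def recurse(node: Tree, context: FrozenSet[int], weight: float) -> None:
        if isinstance(node, int):
            # Leaf: weighted marginal contribution given the visible context.
            phi[node] += weight * (value(context | {node}) - value(context))
            return
        left, right = node
        # Each child is evaluated with its sibling hidden and with it visible,
        # and the two cases share the node's weight equally.
        recurse(left, context, weight / 2)
        recurse(left, context | leaves(right), weight / 2)
        recurse(right, context, weight / 2)
        recurse(right, context | leaves(left), weight / 2)

    recurse(tree, frozenset(), 1.0)
    return phi


def toy_value(visible: FrozenSet[int]) -> float:
    """Toy score: leaves 0 and 1 only matter together; leaf 2 adds 0.5 on its own."""
    return (1.0 if {0, 1} <= visible else 0.0) + (0.5 if 2 in visible else 0.0)


tree: Tree = ((0, 1), (2, 3))
phi = owen_values(tree, toy_value)
print(sorted(phi.items()))  # [(0, 0.5), (1, 0.5), (2, 0.5), (3, 0.0)]
```

The attributions sum to `toy_value` of all leaves minus `toy_value` of the empty set (1.5 here), so the sketch preserves the efficiency property. It expands the full tree, so the cost grows quickly with depth; a practical method would stop refining regions once an evaluation budget is spent.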

Links:

- Main Technical Track: ShapBPT for improved Image Feature Attributions using Binary Partition Trees

- The method is available at: https://github.com/amparore/shap_bpt

- Conference: AAAI-2026 (40th Annual AAAI Conference on Artificial Intelligence)

- Link to talk: https://aaai.org/wp-content/uploads/2026/01/Main-track-poster-presentations-1.pdf

- Python Package: https://pypi.org/project/shap-bpt/ (install with `pip install shap-bpt`)

- Poster in PDF: https://rashidrao-pk.github.io/files/AAAI_26_poster.pdf

- PDF on ArXiv, Technical Appendix.

- https://github.com/rashidrao-pk/shap_bpt_tests

How It Works
