Object Detection and Classification Based on Feature Fusion and Deep Convolutional Neural Network

Published in COMSATS University Islamabad, Wah Campus, 2019

Master of Science in Computer Science

COMSATS University Islamabad, Wah Campus, Pakistan

Candidate: Muhammad Rashid

Registration No.: CIIT/SP17-RCS-009/Wah

Supervisor: Dr. Muhammad Sharif

Department: Computer Science

Session: Fall 2018


Thesis Overview

Thesis Statistics

- 📘 5 Main Chapters

- 🧠 Deep CNN + Handcrafted Feature Fusion

- 🔍 Object Detection and Classification

- 🧩 SIFT Point Features

- 🖼️ AlexNet and VGG-19 Deep Features

- 📉 Rényi Entropy-Based Feature Selection

- 🧪 Evaluated on 5 Public Datasets

- 🏆 Reported up to 100% classification accuracy on the Birds dataset

Abstract

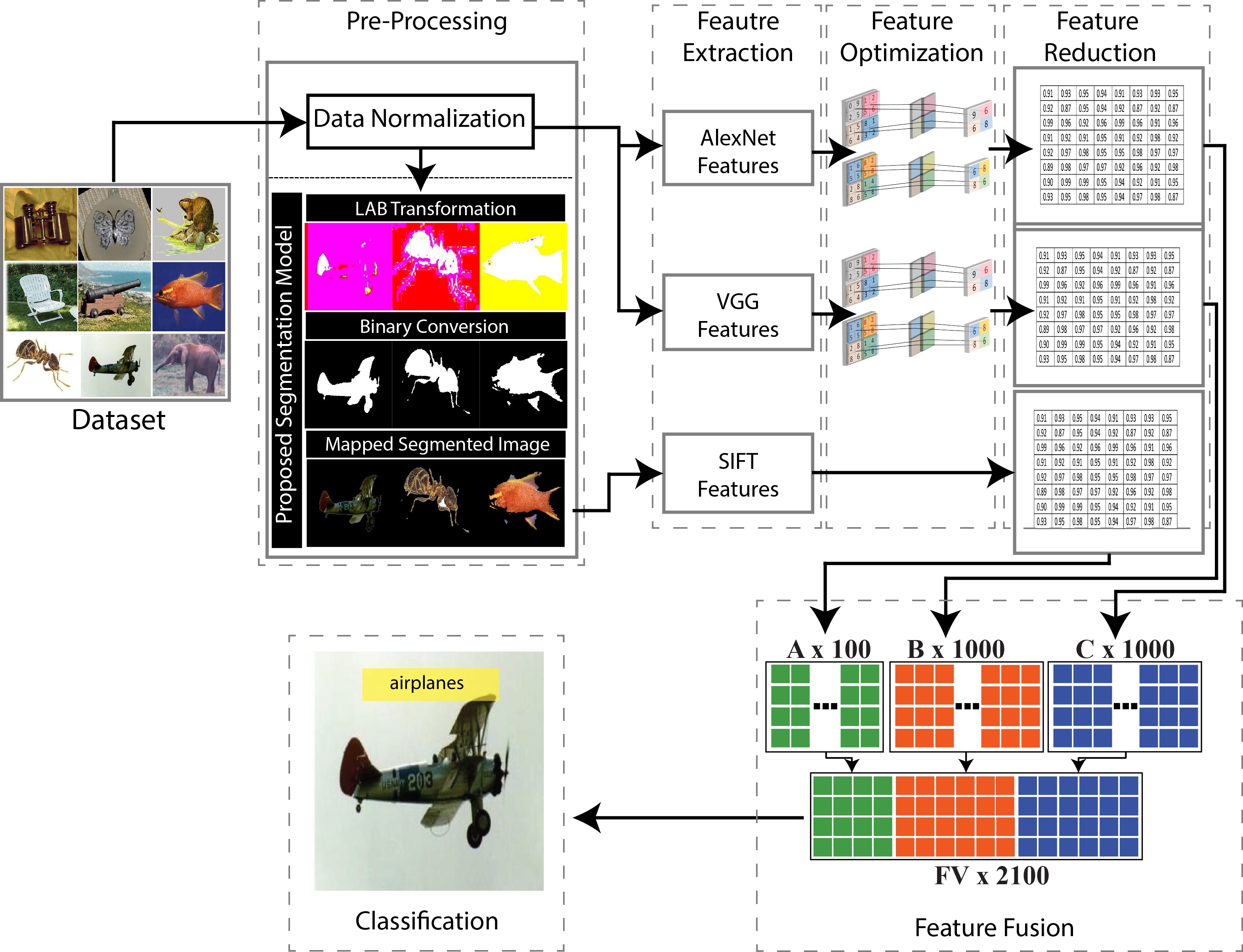

Object detection and classification are important yet challenging tasks in computer vision and machine learning, with broad applications in video surveillance, pedestrian detection, object recognition, and image understanding. This thesis proposes a deep learning-based object detection and classification framework that combines handcrafted SIFT point features with deep convolutional neural network features.

The proposed method first applies an improved saliency-based segmentation method to identify prominent object regions. SIFT point features are extracted from the segmented object regions, while deep CNN features are extracted from two pre-trained convolutional neural networks: AlexNet and VGG-19. Feature activations are obtained from fully connected layers and refined using max pooling to reduce noise and redundancy.

To improve feature compactness and classification performance, a Rényi entropy-controlled feature selection method is applied. The selected SIFT and deep CNN features are fused using a serial feature fusion strategy and passed to an ensemble classifier for final classification.

The proposed method was evaluated on five publicly available datasets: Caltech-101, Pascal 3D+, Barkley 3D, Birds, and Butterflies. The reported classification accuracies were 93.8%, 88.6%, 99.7%, 100%, and 98.0%, respectively.

Introduction

Object detection and classification are central problems in computer vision. They aim to identify objects in images and assign them to their correct categories. These tasks are widely used in surveillance, pedestrian detection, target recognition, face detection, optical character recognition, and automated image analysis.

Traditional object classification methods rely on handcrafted descriptors such as SIFT, HOG, SURF, texture, color, and shape features. Although these descriptors are useful, their performance may degrade under complex backgrounds, object similarity, illumination variations, and large intra-class variation.

Deep convolutional neural networks have significantly improved visual recognition performance by automatically learning hierarchical visual representations. However, a single CNN model may not capture all relevant local and global object characteristics. This thesis therefore investigates the fusion of handcrafted and deep CNN features for improved object detection and classification.

Research Motivation

The motivation of this thesis is to improve object classification accuracy by combining complementary visual features. Handcrafted features such as SIFT capture local keypoint information, while deep CNN models capture high-level semantic representations.

Specifically, the thesis is motivated by the following goals:

- Improving classification performance under complex backgrounds

- Combining local handcrafted descriptors with deep CNN features

- Reducing redundant and noisy features

- Improving computational efficiency through feature selection

- Evaluating the proposed method on multiple public datasets

Problem Statement

Object detection and classification systems face several challenges:

- Noise, distortion, and illumination variations can reduce classification accuracy.

- Irrelevant and redundant features increase computational cost.

- Feature selection is required to identify the most discriminative descriptors.

- Feature fusion is challenging when descriptors have different dimensions.

- Classification becomes harder when datasets contain many object classes and complex backgrounds.

This thesis addresses these challenges by proposing a feature fusion and feature selection framework based on SIFT features, AlexNet features, VGG-19 features, Rényi entropy-based feature selection, and ensemble classification.

Main Contributions

The main contributions of this thesis are:

An improved saliency-based preprocessing method for object region extraction.

Extraction of SIFT point features from segmented and mapped RGB object regions.

Extraction of deep CNN features from pre-trained AlexNet and VGG-19 models.

Application of max pooling to reduce noise in deep CNN feature vectors.

Use of Rényi entropy-controlled feature selection to select the most relevant features.

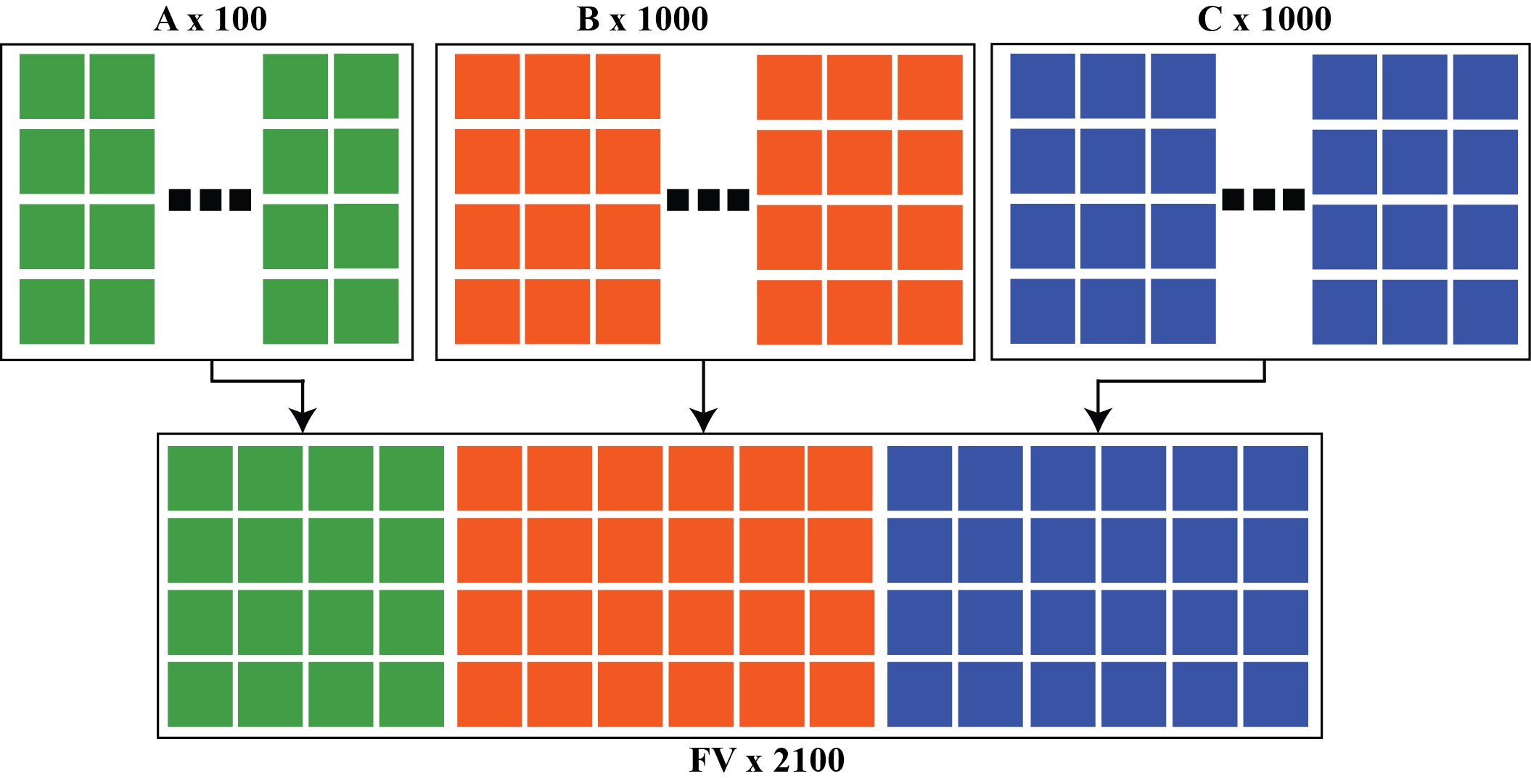

Serial fusion of selected SIFT and deep CNN features into a unified feature vector.

Classification using ensemble classifiers and comparison with SVM, KNN, decision tree, and other supervised classifiers.

Proposed Methodology

The proposed framework follows a multi-stage object detection and classification pipeline.

1. Improved Saliency-Based Segmentation

The input image is first transformed into LAB color space. An improved saliency method is then applied to segment the most prominent object region from the image. The segmented object is mapped back to RGB space for feature extraction.
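The segmentation idea can be illustrated with a minimal sketch. It is not the improved saliency method of the thesis, whose details are not given here. As a stand-in, it assumes a simple colour-contrast saliency: each pixel is scored by its distance from the mean LAB colour, and pixels above twice the mean score are kept. The function names `saliency_mask` and `apply_mask`, the threshold factor, and the assumption that the image has already been converted to LAB are all illustrative.

```python
import numpy as np

def saliency_mask(lab_image: np.ndarray, factor: float = 2.0) -> np.ndarray:
    """Boolean mask of salient pixels for an H x W x 3 LAB image.

    Saliency is the Euclidean distance of each pixel's LAB colour from the
    image's mean LAB colour. Pixels whose saliency exceeds `factor` times
    the mean saliency are marked as object pixels (assumed threshold rule).
    """
    lab = np.asarray(lab_image, dtype=np.float64)
    mean_color = lab.reshape(-1, 3).mean(axis=0)
    saliency = np.linalg.norm(lab - mean_color, axis=2)
    return saliency > factor * saliency.mean()

def apply_mask(rgb_image: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Map the mask back onto the RGB image, zeroing background pixels."""
    return rgb_image * mask[..., None]

# Toy example: uniform background with a 2 x 2 patch of a different colour.
lab = np.zeros((10, 10, 3))
lab[...] = (50.0, 0.0, 0.0)
lab[4:6, 4:6] = (80.0, 40.0, 40.0)
rgb = np.full((10, 10, 3), 255, dtype=np.uint8)

mask = saliency_mask(lab)
segmented = apply_mask(rgb, mask)
```

In this toy image only the four patch pixels survive the mask, so later feature extraction would see the object region and a blank background.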

2. SIFT Point Feature Extraction

SIFT features are extracted from the segmented object region. These features capture local keypoints and are robust to scale, rotation, and local appearance changes.


3. Deep CNN Feature Extraction

Two pre-trained CNN models are used:

- AlexNet

- VGG-19

Deep features are extracted from fully connected layers. Each model provides a high-dimensional feature representation, which is later refined using max pooling.

4. Max Pooling

Max pooling is applied to reduce noise and compact the extracted CNN feature vectors.
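A minimal sketch of pooling a feature vector, assuming non-overlapping windows along the feature axis. The window size used in the thesis is not stated here, so `window=2` and the helper name `max_pool_1d` are illustrative assumptions. The 4096-dimensional input matches the size of the fully connected layers in AlexNet and VGG-19.

```python
import numpy as np

def max_pool_1d(features: np.ndarray, window: int = 2) -> np.ndarray:
    """Non-overlapping max pooling along the last axis.

    Trailing values that do not fill a whole window are dropped.
    """
    usable = (features.shape[-1] // window) * window
    trimmed = features[..., :usable]
    new_shape = trimmed.shape[:-1] + (usable // window, window)
    return trimmed.reshape(new_shape).max(axis=-1)

# A batch of 3 hypothetical fully connected activations, 4096 values each.
fc_features = np.random.default_rng(0).normal(size=(3, 4096))
pooled = max_pool_1d(fc_features, window=2)  # shape (3, 2048)
```

Each output value is the strongest activation in its window, which halves the vector length when `window=2`.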

5. Rényi Entropy-Based Feature Selection

Rényi entropy is used to measure the importance and randomness of extracted features. The most informative features are selected from each feature space.
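For reference, the Rényi entropy of order α (α > 0, α ≠ 1) of a discrete distribution p is log(Σ pᵢ^α) / (1 − α). The sketch below scores each feature column by the Rényi entropy of its histogram and keeps the top-scoring columns. The histogram estimate, the bin count, α = 2, and the rule of keeping the highest-entropy features are assumptions made for illustration; the exact selection rule of the thesis may differ.

```python
import numpy as np

def renyi_entropy(p, alpha: float = 2.0) -> float:
    """Rényi entropy (in nats) of a discrete distribution, alpha > 0, alpha != 1."""
    p = np.asarray(p, dtype=np.float64)
    p = p[p > 0]  # zero-probability bins do not contribute
    return float(np.log(np.sum(p ** alpha)) / (1.0 - alpha))

def feature_entropies(X: np.ndarray, bins: int = 16, alpha: float = 2.0) -> np.ndarray:
    """Entropy score of each column of X (samples x features)."""
    scores = np.empty(X.shape[1])
    for j in range(X.shape[1]):
        counts, _ = np.histogram(X[:, j], bins=bins)
        scores[j] = renyi_entropy(counts / counts.sum(), alpha)
    return scores

def select_top_k(X: np.ndarray, k: int, bins: int = 16, alpha: float = 2.0):
    """Keep the k columns with the highest entropy (assumed criterion)."""
    scores = feature_entropies(X, bins, alpha)
    keep = np.sort(np.argsort(scores)[::-1][:k])
    return X[:, keep], keep

# Toy matrix: two constant (uninformative) columns and one that varies.
X = np.column_stack([np.ones(64), np.linspace(0.0, 1.0, 64), np.full(64, 3.0)])
X_selected, kept = select_top_k(X, k=1)
```

A constant column has entropy zero under this estimate, so it is the first to be discarded.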

6. Feature Fusion

Selected AlexNet, VGG-19, and SIFT features are fused using a serial feature fusion strategy.
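Serial fusion amounts to concatenating the selected feature vectors of each sample end to end, so the descriptors may have different lengths as long as they describe the same samples. A minimal sketch follows; the helper name `serial_fusion` and the feature sizes in the example are hypothetical.

```python
import numpy as np

def serial_fusion(*feature_sets: np.ndarray) -> np.ndarray:
    """Concatenate per-sample feature matrices along the feature axis.

    Every matrix must have one row per sample; column counts may differ.
    """
    sample_counts = {f.shape[0] for f in feature_sets}
    if len(sample_counts) != 1:
        raise ValueError("all feature sets must describe the same samples")
    return np.hstack(feature_sets)

# Hypothetical selected features for 4 images.
rng = np.random.default_rng(0)
alexnet_sel = rng.normal(size=(4, 10))
vgg19_sel = rng.normal(size=(4, 20))
sift_sel = rng.normal(size=(4, 5))

fused = serial_fusion(alexnet_sel, vgg19_sel, sift_sel)  # shape (4, 35)
```

The fused length is the sum of the individual lengths, which is one reason feature selection is applied before fusion.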

7. Classification

The fused feature vector is passed to supervised classifiers. The best performance is reported using an ensemble classifier.
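The ensemble variant used in the thesis is not specified here. As an illustration of the general idea only, the sketch below assumes a random-subspace ensemble: each learner is a nearest-centroid classifier trained on a random subset of the fused features, and the final label is decided by majority vote. The class name, learner count, and subspace fraction are hypothetical.

```python
import numpy as np

class SubspaceCentroidEnsemble:
    """Majority vote over nearest-centroid learners on random feature subsets."""

    def __init__(self, n_learners: int = 15, subspace_fraction: float = 0.5, seed: int = 0):
        self.n_learners = n_learners
        self.subspace_fraction = subspace_fraction
        self.seed = seed

    def fit(self, X, y):
        X, y = np.asarray(X, dtype=np.float64), np.asarray(y)
        rng = np.random.default_rng(self.seed)
        self.classes_ = np.unique(y)
        n_features = X.shape[1]
        size = max(1, int(self.subspace_fraction * n_features))
        self.learners_ = []
        for _ in range(self.n_learners):
            cols = rng.choice(n_features, size=size, replace=False)
            # One centroid per class, computed on this learner's feature subset.
            centroids = np.stack([X[y == c][:, cols].mean(axis=0) for c in self.classes_])
            self.learners_.append((cols, centroids))
        return self

    def predict(self, X):
        X = np.asarray(X, dtype=np.float64)
        votes = np.zeros((X.shape[0], len(self.classes_)), dtype=int)
        rows = np.arange(X.shape[0])
        for cols, centroids in self.learners_:
            # Distance from every sample to every class centroid.
            dists = np.linalg.norm(X[:, cols][:, None, :] - centroids[None, :, :], axis=2)
            votes[rows, dists.argmin(axis=1)] += 1
        return self.classes_[votes.argmax(axis=1)]

# Toy fused features: two well-separated classes in 6 dimensions.
X_train = np.vstack([np.zeros((5, 6)), np.full((5, 6), 10.0)])
y_train = np.array([0] * 5 + [1] * 5)
model = SubspaceCentroidEnsemble().fit(X_train, y_train)
predictions = model.predict(np.array([[0.1] * 6, [9.9] * 6]))
```

Training each learner on a different feature subset keeps individual learners cheap and lets the vote smooth over features that are noisy for a particular class.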

Datasets

The proposed method was evaluated on five public datasets:

| Dataset | Classes | Total Images |

|---|---|---|

| Caltech-101 | 101 | 9144 |

| Pascal 3D+ | 12 | 22394 |

| Barkley 3D | 10 | 6604 |

| Birds | 6 | 600 |

| Butterflies | 7 | 619 |

Experimental Results

The best reported accuracy for each dataset is listed below, ranging from 88.6% on Pascal 3D+ to 100% on Birds.

| Dataset | Best Reported Accuracy |

|---|---|

| Caltech-101 | 93.8% |

| Pascal 3D+ | 88.6% |

| Barkley 3D | 99.7% |

| Birds | 100% |

| Butterflies | 98.0% |

The results show that fusing handcrafted SIFT features with deep CNN features improves classification performance compared with using any single feature descriptor on its own.

Publications from this Thesis

The research conducted during this Master’s thesis resulted in the following peer-reviewed journal publications.

1. Object Detection and Classification: A Joint Selection and Fusion Strategy of Deep Convolutional Neural Network and SIFT Point Features

Published in: Multimedia Tools and Applications (Springer), 2018

This work proposed a hybrid object detection and classification framework that combines deep CNN representations with handcrafted SIFT point features. The method introduced a joint feature selection and feature fusion strategy to improve classification performance across multiple benchmark datasets.

Main Contributions

- Hybrid CNN + SIFT feature extraction

- Joint feature selection and fusion

- Improved object classification accuracy

- Integration of handcrafted and deep representations

Keywords

CNN, SIFT, Feature Fusion, Object Detection, Computer Vision

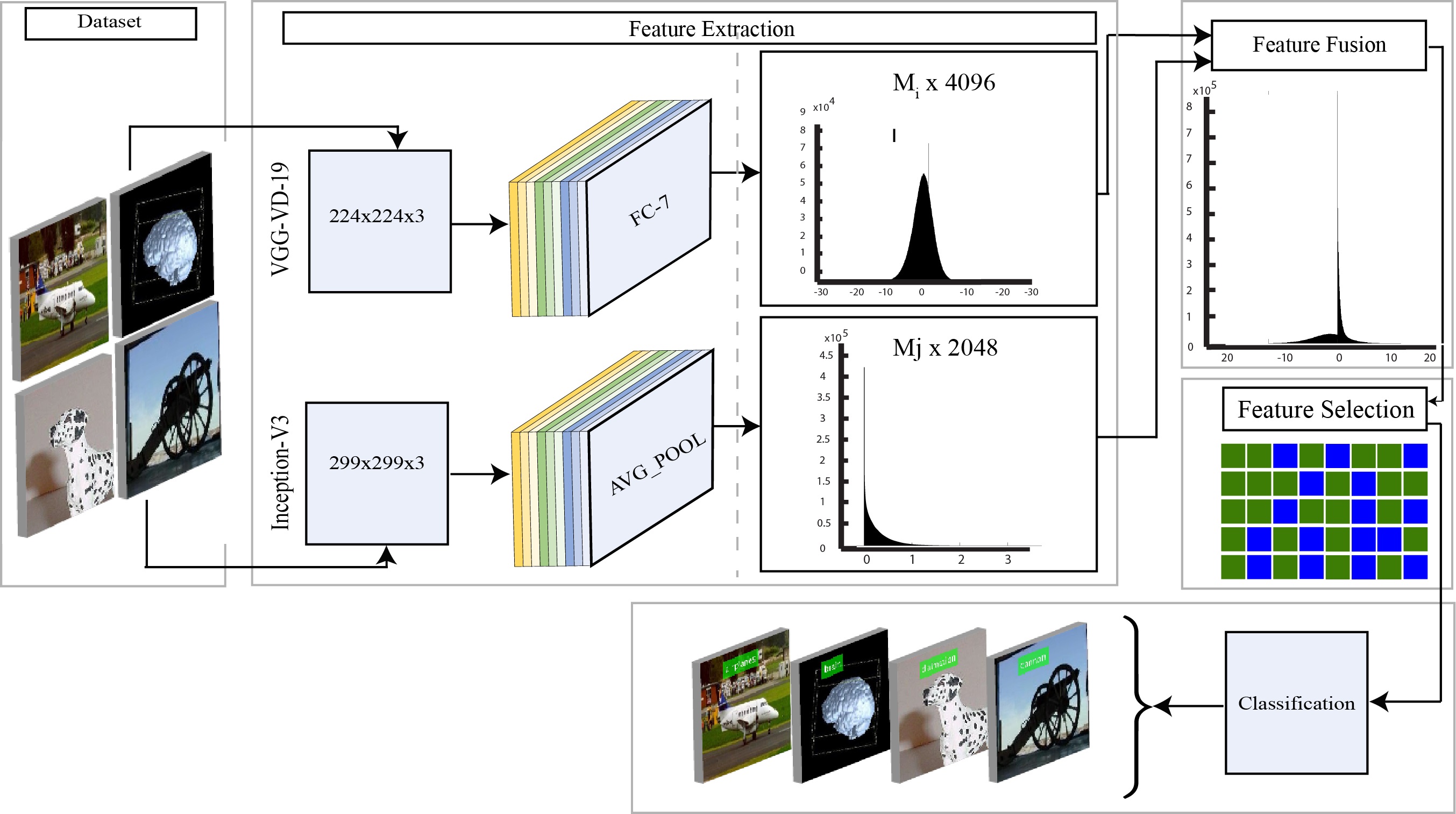

2. A Sustainable Deep Learning Framework for Object Recognition Using Multi-Layers Deep Features Fusion and Selection

Published in: Sustainability (MDPI), 2020

This work proposed a sustainable deep learning framework for object recognition using transfer learning, multi-layer deep feature extraction, feature fusion, and entropy-controlled feature selection.

The framework combined VGG19 and InceptionV3 deep representations using Parallel Maximum Covariance (PMC) fusion and selected discriminative features through Multi Logistic Regression controlled Entropy-Variances (MRcEV).

Main Contributions

- Transfer learning using VGG19 and InceptionV3

- Multi-layer deep feature extraction

- Parallel Maximum Covariance (PMC) fusion

- MRcEV feature selection

- Evaluation on multiple object recognition datasets

Datasets

- Caltech-101

- Birds

- Butterflies

- CIFAR-100

Keywords

Transfer Learning, VGG19, InceptionV3, Feature Selection, Object Recognition

Conclusion

This thesis proposed an object detection and classification framework based on feature fusion and deep convolutional neural networks. The method integrates improved saliency-based segmentation, SIFT point features, AlexNet and VGG-19 deep features, Rényi entropy-based feature selection, and ensemble classification.

Experimental results on five public datasets demonstrate that the proposed approach improves classification accuracy and provides competitive performance compared to existing techniques. The work highlights the value of combining handcrafted local descriptors with deep CNN representations for robust object classification.

Future work may extend this approach to real-time object detection, multiple object classification, and more advanced deep learning architectures.

Citation

@mastersthesis{rashid2019objectdetection,

title = {Object Detection and Classification Based on Feature Fusion and Deep Convolutional Neural Network},

author = {Rashid, Muhammad},

school = {COMSATS University Islamabad, Wah Campus},

type = {MS Thesis},

year = {2019}

}