ShapBPT in Perspective: A Consolidated Review and an eXplainable Anomaly Detection Case Study

Published in QualITA Workshop | ICPE 2026 (ACM), 2026

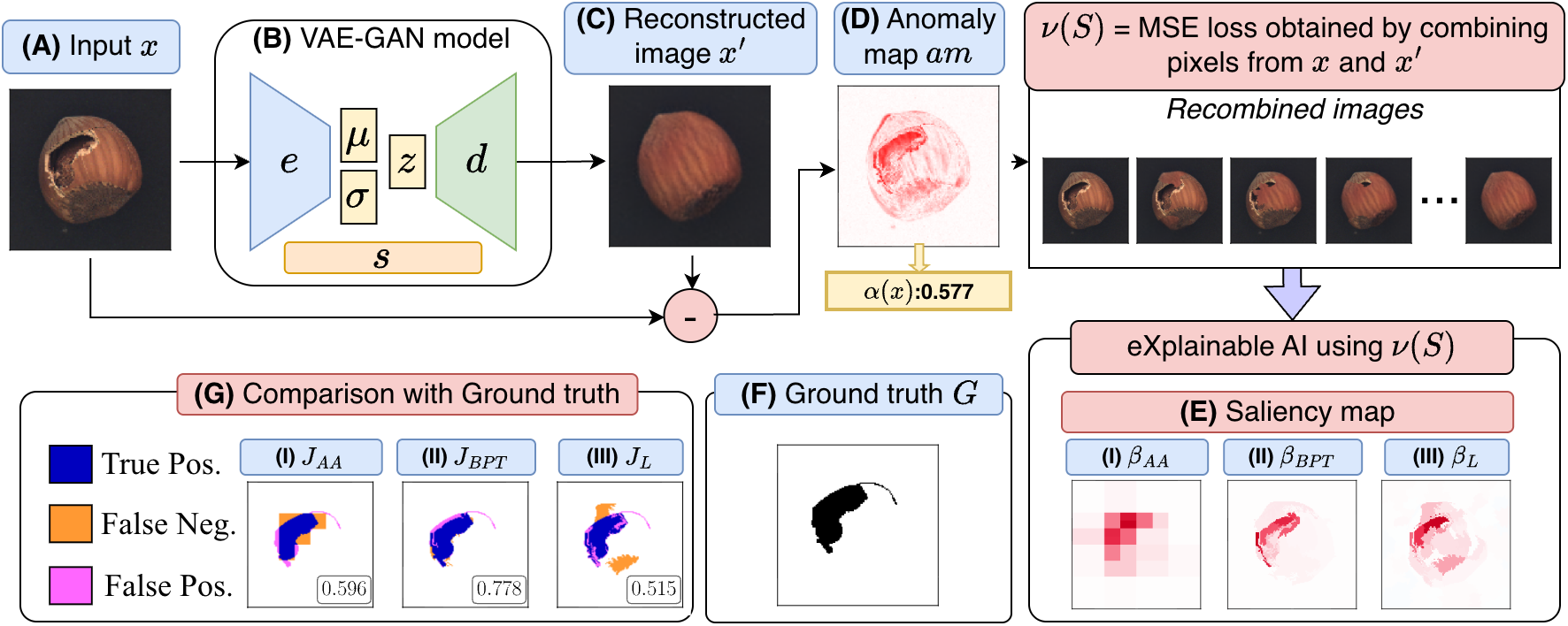

--- Workflow of Explainable Anomaly Detection using ShapBPT

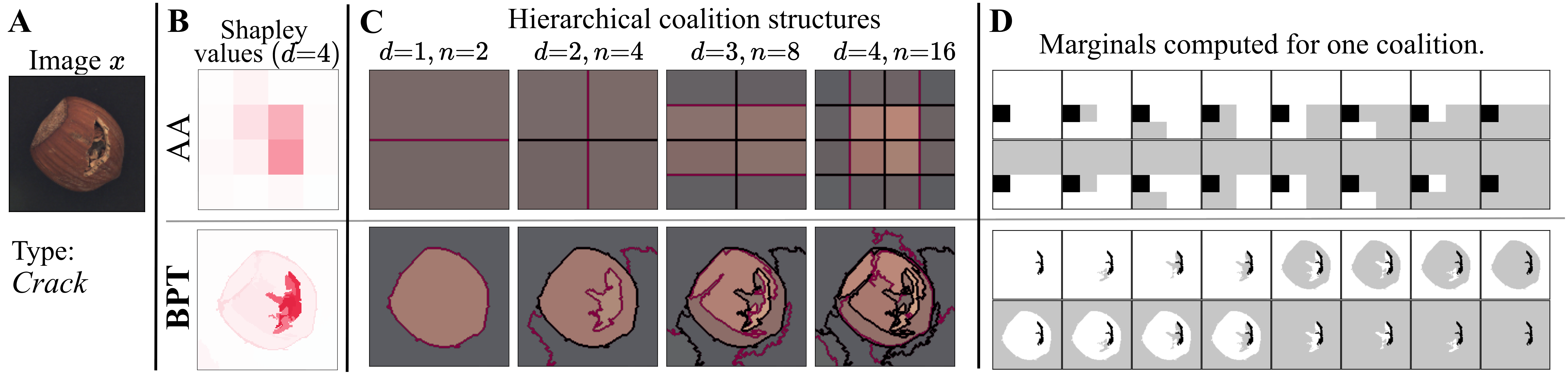

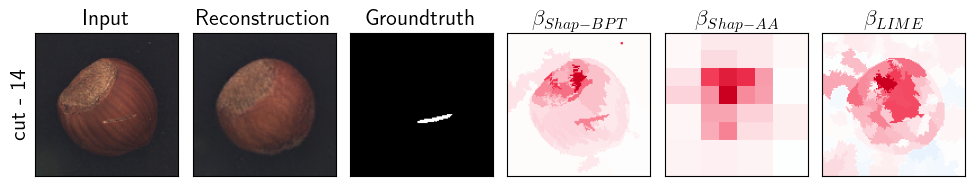

The method explains anomaly detection decisions by assigning attribution scores to image regions. Instead of relying on fixed geometric partitions, ShapBPT uses a **data-aware image hierarchy** based on Binary Partition Trees. This allows the explanation to follow meaningful visual structures in the image.

Datasets and Models
===

* **Task**: Explainable Anomaly Detection
* **Method**: ShapBPT
* **Model Type**: Black-box anomaly detection models
* **Explanation Type**: Pixel-level / region-level feature attribution

Authors ✍️
===

| Sr. No. | Author Name | Affiliation | Google Scholar |
| :--: | :--: | :--: | :--: |
| 1. | Muhammad Rashid | University of Torino, Dept. of Computer Science, Torino, Italy | [Muhammad Rashid](https://scholar.google.com/citations?user=F5u_Z5MAAAAJ&hl=en) |
| 2. | Elvio G. Amparore | University of Torino, Dept. of Computer Science, Torino, Italy | [Elvio G. Amparore](https://scholar.google.com/citations?user=Hivlp1kAAAAJ&hl=en&oi=ao) |
| 3. | Enrico Ferrari | Rulex Innovation Labs, Rulex Inc., Genova, Italy | [Enrico Ferrari](https://scholar.google.com/citations?user=QOflGNIAAAAJ&hl=en&oi=ao) |
| 4. | Damiano Verda | Rulex Innovation Labs, Rulex Inc., Genova, Italy | [Damiano Verda](https://scholar.google.com/citations?user=t6o9YSsAAAAJ&hl=en&oi=ao) |

### Sample
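To make the idea of attributing a score over a data-aware hierarchy concrete, here is a minimal, self-contained sketch on a toy 1D "image". It is not the ShapBPT implementation or its API. The names `SIGNAL`, `anomaly_score`, `build_bpt`, `attribute`, and `explain` are hypothetical, the model is an invented black box, and the tree is a simplified stand-in for a Binary Partition Tree (adjacent regions with the most similar mean intensity are merged first). Attribution uses an exact Owen-style recursion over the binary hierarchy, which is only practical at toy sizes.

```python
SIGNAL = [0.1, 0.1, 0.2, 0.9, 1.0, 0.2, 0.1, 0.1]  # toy 1D "image" (assumed data)


def anomaly_score(visible):
    """Hypothetical black-box model: scores only the visible pixel indices."""
    score = sum(max(0.0, SIGNAL[i] - 0.5) for i in visible)
    if 3 in visible and 4 in visible:  # interaction between the two bright pixels
        score += 0.2
    return score


def build_bpt(signal):
    """Bottom-up binary partition tree: repeatedly merge the two adjacent
    regions whose mean intensities are closest.
    A node is (pixels, left_child, right_child); leaves have no children."""
    nodes = [((i,), None, None) for i in range(len(signal))]

    def mean(node):
        return sum(signal[i] for i in node[0]) / len(node[0])

    while len(nodes) > 1:
        k = min(range(len(nodes) - 1),
                key=lambda j: abs(mean(nodes[j]) - mean(nodes[j + 1])))
        nodes[k:k + 2] = [(nodes[k][0] + nodes[k + 1][0], nodes[k], nodes[k + 1])]
    return nodes[0]


def attribute(node, model, contexts, out, max_depth=float("inf")):
    """Distribute the model score down the tree.
    `contexts` is a list of (visible_pixels_outside_node, weight) pairs."""
    pixels, left, right = node
    if left is None or max_depth == 0:
        # Stop here: weighted marginal contribution of the whole region,
        # spread uniformly over its pixels (region-level attribution).
        region = frozenset(pixels)
        value = sum(w * (model(ctx | region) - model(ctx)) for ctx, w in contexts)
        for p in pixels:
            out[p] = value / len(pixels)
        return
    for child, sibling in ((left, right), (right, left)):
        sib = frozenset(sibling[0])
        # Two-player Shapley step: the sibling is absent or present, equally weighted.
        child_contexts = ([(ctx, w / 2) for ctx, w in contexts]
                          + [(ctx | sib, w / 2) for ctx, w in contexts])
        attribute(child, model, child_contexts, out, max_depth - 1)


def explain(signal, model, max_depth=float("inf")):
    out = [0.0] * len(signal)
    attribute(build_bpt(signal), model, [(frozenset(), 1.0)], out, max_depth)
    return out


scores = explain(SIGNAL, anomaly_score)
print([round(s, 3) for s in scores])
```

In this toy setting only the two bright pixels receive non-zero attribution, and the scores sum to the difference between the model output on the full signal and on the empty one. Passing a small `max_depth` stops the recursion early and yields coarser, region-level scores. The number of contexts doubles with each tree level, so a practical method would need to approximate or budget the model evaluations.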

Keywords 🔍
===

ShapBPT · Explainable Anomaly Detection · XAI · Image Feature Attributions · Shapley Values · Binary Partition Trees · ICPE 2026 · QualITA Workshop

Citation
===

```bibtex
@inproceedings{rashid2026shapbptperspective,
  author    = {Rashid, Muhammad and Amparore, Elvio G.},
  title     = {ShapBPT in Perspective: A Consolidated Review and an eXplainable Anomaly Detection Case Study},
  booktitle = {QualITA Workshop, ICPE 2026},
  year      = {2026},
  publisher = {ACM},
  doi       = {10.1145/3777911.3800638}
}
```

Recommended citation: Rashid, Muhammad and Amparore, Elvio G. (2026). ShapBPT in Perspective: A Consolidated Review and an eXplainable Anomaly Detection Case Study. QualITA Workshop, ICPE 2026 (ACM).
